Is Microsoft customer service good? 2020 rating

Big improvements, but still no Apple

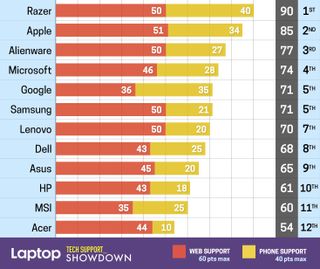

Microsoft put in a mediocre showing last year, falling short of the support provided by archnemesis Apple. This year is a little different, thanks to improvements made to Microsoft's online resources and phone support. Is it enough to dethrone Apple? No, but Microsoft's support is moving in the right direction and getting closer to matching the quality of its premium products.

Microsoft tech support

| Overall | Web Score | Phone Score | Avg. Call Time (min:sec) | Phone Number | Web Support |
|---|---|---|---|---|---|
| 74/100 | 46/60 | 28/40 | 30:04 | 1-800-642-7676 | Link |

I tested Microsoft's tech support by borrowing a Surface Go and asking three questions over the phone, two on social media and one on the company's live chat. I asked the support agents about disabling the webcam, remapping buttons on the Surface Pen and downloading the new Edge browser.

Microsoft's support team provided accurate answers, but those responses didn't arrive as fast as I had hoped. I'd also like to see Microsoft expand its database of support articles and offer more generous warranty policies and prices.

Web and social support

Microsoft's website is easy to navigate thanks to its spacious interface and high-resolution images. It offers plenty of information to sift through, from how-tos and warranty documents to active community forums.

Microsoft has hundreds of support documents covering a wide range of issues, and it presents them front and center on its website. There is also a Microsoft Helps YouTube account with 150 uploads (up from about 100 last year) that gives step-by-step instructions for important actions like checking for updates. If you don't want to watch videos or search through documents, then scroll down to visit the community forums or the Contact Us page.

I quickly got results for my questions about the Surface Pen and downloading the new Edge browser from Microsoft's support hub, but I needed help from the forum to figure out how to disable my Surface Go's front-facing camera.

If you don't want to talk to a human, you can try direct-messaging a virtual assistant through the Answer Desk (there is a separate option for people with disabilities). I had mixed results with the virtual assistant. When I asked about adjusting Surface Pen shortcuts, the chatbot showed me several related topics, but they were too specific and left out the correct answer.


When the virtual helper ran out of ideas, it handed me over to Judy Anne, who entered the chat within seconds at 11:45 p.m. on a Monday. I asked about remapping the buttons on my Surface Pen, and Judy immediately suggested that she take remote control of my Surface Go. I agreed (even though it's a fairly simple task), and she sent straightforward steps for granting her access. Once connected, Judy opened the Pen & Windows Ink settings and, after some fiddling, got me to the correct drop-down menus, where I could adjust the button shortcuts to my liking.

The exchange felt impersonal at first, but Judy warmed up and revealed a strange but endearing quirkiness. By the end, I was pretty happy with the experience.

Microsoft's social media support got the job done, although it wasn't the fastest to respond to my questions. I asked @MicrosoftHelps how to download the new Microsoft Edge browser and received a thorough reply with the appropriate link in 43 minutes. Microsoft's Twitter team even sent a personalized follow-up message asking how the installation went.

Facebook Messenger support was even slower. My question about disabling the Surface Go's webcam was answered 4 hours and 22 minutes after I sent the query to the Microsoft Surface Facebook account (which isn't clearly labeled a support account). Microsoft's reply consisted of a link to a list of ways you can prevent certain apps from accessing your webcam. The page did not include instructions on disabling the camera altogether.

Phone support

If you'd prefer to speak to a human, try the phone; the easiest way to reach Microsoft's phone agents is by calling the general 24/7 support line at 1-800-642-7676. Microsoft has 10 worldwide call centers, in the U.S., Latin America, China and Europe. You'll first need to speak with the automated phone system, which will redirect your call to a human service agent. There weren't any prompts to lead me in the right direction, but telling the automated voice "Surface" got me to the correct agent.

I placed my first call at 6:17 p.m. on a Friday. Within 5 minutes, Anne was helping me download the new Edge browser. She first asked for the email address associated with my account so she could verify, via a six-digit code, that I was the owner of the Surface Go.

Rather annoyingly, you can't download the new Edge browser unless you're running the latest version of Windows. So Anne checked the version of Windows 10 on my Surface Go by entering "winver" in the command prompt. The laptop was a few versions behind, but Anne patiently waited while the system updated.

Once that was completed, she told me the web page I needed to visit in order to download the new Edge. The agent was friendly and helpful, and while this question is somewhat of a softball toss to Microsoft, Anne knocked it out of the park. The call took 17 minutes and 19 seconds, which isn't too bad for a correct answer.

The second call was also a success, although it took the better part of an hour (54 minutes and 29 seconds). I rang Microsoft's support line at 5:26 p.m. on a Saturday and was warned that wait times could be lengthy. I chose to stay on the line instead of scheduling a callback (a great option to have). Juan joined the call 27 minutes later. Fortunately, his good nature and helpfulness lifted the cranky mood I was in after listening to hold music for so long.

Once he'd verified my account, Juan confirmed that I really wanted to disable the front-facing webcam on my Surface Go. I'm glad he wanted to get this right, because a miscommunication could have caused a lot of frustration. Juan didn't know the answer firsthand, but after putting me briefly on hold, he came back with a wealth of knowledge. He told me to shut down my Surface Go, then walked me through the steps of entering the BIOS (hold volume up and power).

Once in the BIOS, Juan specified exactly where I needed to go and what steps to take to disable the webcam. The process was simple, and Juan, who was patient throughout the activity and even casually asked how my day was going, verified that my camera was disabled by quickly taking control of the device via a remote session and opening the camera app. He then recommended the Tips and Quick Assist apps before wishing me a good day. Juan told me he was excited to take the next day off — he certainly deserves it.

My final call was at 3 p.m. on a Monday. This time, the virtual operator failed to find my product when I provided my serial number, which I had to give again when a human finally answered. I spoke to Javiera, who was extremely friendly and, more or less, got me where I needed to go. When I asked about changing the shortcuts on my Surface Pen, Javiera told me to open Settings and select Pen & Windows Ink. When I said Pen & Windows Ink wasn't an option on the main Settings page, Javiera seemed stumped. I gave her a hand by searching for the term and landed in the Pen settings, where I could adjust the button shortcuts.

Javiera was infectiously friendly, but her instructions weren't very detailed and she seemed to be following a prompt that could use some updating. This last call took 18 minutes and 20 seconds.

Warranty

Surface laptops and tablets ship with a one-year limited warranty. Customers in select markets can purchase an extended two-year protection plan called Microsoft Complete. Perks of that upgraded plan include hardware and accidental-damage coverage (up to two claims), software support for Windows and Office, and Microsoft Store benefits. You can purchase Microsoft Complete at checkout or up to 45 days after purchase. Pricing depends on the device; Complete costs $99 for our Surface Go but goes up to $249 for a Surface Book.

Manually upgrading the RAM or storage of a Surface device voids its warranty, even though the latest model, the Surface Laptop 3, was designed with an easy-access SSD slot.

Bottom line

Microsoft has one of the best support sites on the web, now featuring an expanded database of documents, videos and forum posts for answering popular troubleshooting queries. And while the social media and phone support teams were slow to respond, Microsoft's agents were courteous and usually gave us accurate answers. Not to mention, the expanding Microsoft retail stores provide face-to-face assistance. There is still room for improvement, but Microsoft put in a good showing for 2020.

Phillip Tracy is the assistant managing editor at Laptop Mag where he reviews laptops, phones and other gadgets while covering the latest industry news. After graduating with a journalism degree from the University of Texas at Austin, Phillip became a tech reporter at the Daily Dot. There, he wrote reviews for a range of gadgets and covered everything from social media trends to cybersecurity. Prior to that, he wrote for RCR Wireless News covering 5G and IoT. When he's not tinkering with devices, you can find Phillip playing video games, reading, traveling or watching soccer.