News

Latest News

New Windows 11 update injects ads in your Start menu — here's how to turn them off

By Momo Tabari published

Microsoft released a Windows 11 update yesterday that places advertisements in the Start menu, with the change gradually rolling out to users throughout the week. Here's how to turn them off.

Best tablet deals in April 2024

By Hilda Scott last updated

The best tablet deals from a variety of retailers. Save big on today's best tablet PCs.

Click no more? iPhone 16 is rumored to have no physical buttons

By Mark Anthony Ramirez last updated

After years of rumors and speculation, it seems Apple might finally be ditching those clicky buttons for a sleeker, more modern approach.

Dynabook's new featherlight business laptop may challenge the best from Lenovo, Dell

By Madeline Ricchiuto published

Dynabook has unveiled the new Portégé X40L-M, which isn't as pricey as it seems when you stack it up against the competition.

Best iPad deals of April 2024: Save up to $100

By Hilda Scott last updated

See today's best iPad deals from the best places to buy an iPad.

Best cheap Nintendo Switch game deals of April 2024

By Hilda Scott last updated

The best cheap Nintendo Switch game deals right now. Save on digital and physical Nintendo Switch games.

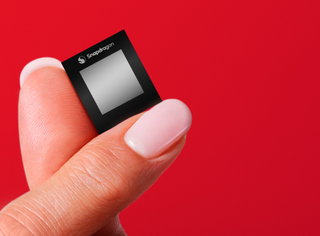

Qualcomm reveals the Snapdragon X Plus along with three X Elite chipsets

By Madeline Ricchiuto published

Qualcomm has confirmed the Snapdragon X Plus and three distinct Snapdragon X Elite SKUs, giving us a total of four new ARM chips.

Apple’s new iPads bore me, and I’m tired of pretending otherwise

By Rael Hornby published

Apple has new iPads on the way, but my enthusiasm is long gone.

My favorite Star Wars game of all time will be free to play on Game Pass soon — it's still the best deal in gaming

By Rami Tabari published

Star Wars Jedi: Survivor retails for $69, but you can get a one-month Game Pass Ultimate subscription for $11.29. That and more is why Game Pass is still the best deal in gaming.

Microsoft's new lightweight AI rivals GPT-3.5 — it's learning from children's books and fits on phones

By Sarah Chaney published

Large language models (LLMs) are still necessary for more complex tasks, but your next phone could sport this new compact AI model from Microsoft: the Phi-3 Mini.
